Hadoop came in as a big data revolution. People interpreted Hadoop as Big Data. If some has to be working on Big Data then the answer was only Hadoop.

What is Hadoop?

Hadoop aims to be an open-source software for

reliable, scalable, distributed computing.

The Apache Hadoop software library is a framework that

allows for the distributed processing of large data sets across clusters

of computers using simple programming models. It is designed to scale up

from single servers to thousands of machines, each offering local

computation and storage. Rather than rely on hardware to deliver

high-availability, the library itself is designed to detect and handle

failures at the application layer, so delivering a highly-available

service on top of a cluster of computers, each of which may be prone to

failures. So in summary, Hadoop is a low cost server farm with cheap servers and cheap software ready for high scalability.

Hadoop is being distributed by many companies such as Amazon Web Services, Cloudera, CloudSpace, Datameer, Datamine, Datasalt, Datastax, Debian, Greenplum , Hortonworks, Hstream, IBM, Impetus, Intel, MapR etc

Is Hadoop technology completely new?

I do not think so. There has been several of those enterprise applications that were proprietary software that existed to solve such reliable, scalable and distributed computing. For web processing, it used to be called as Load balancing servers that used to run scaling out technology for years since the scale of internet happened. Similarly, in the enterprise applications such as SAP, it used to call as Application Server and the Master Gateway server to span out the processing capability. Many of the software companies built solutions to run on the GRID.

History of Linux

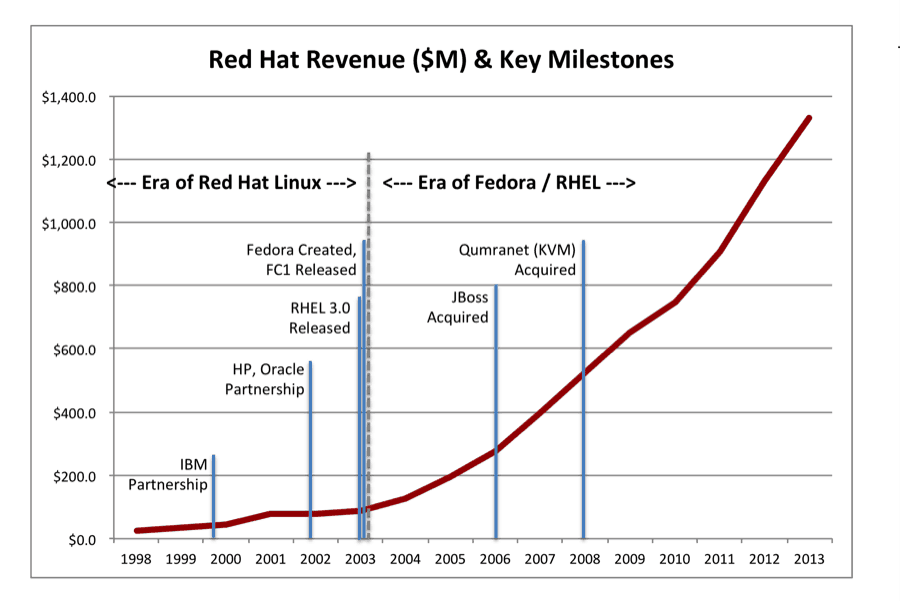

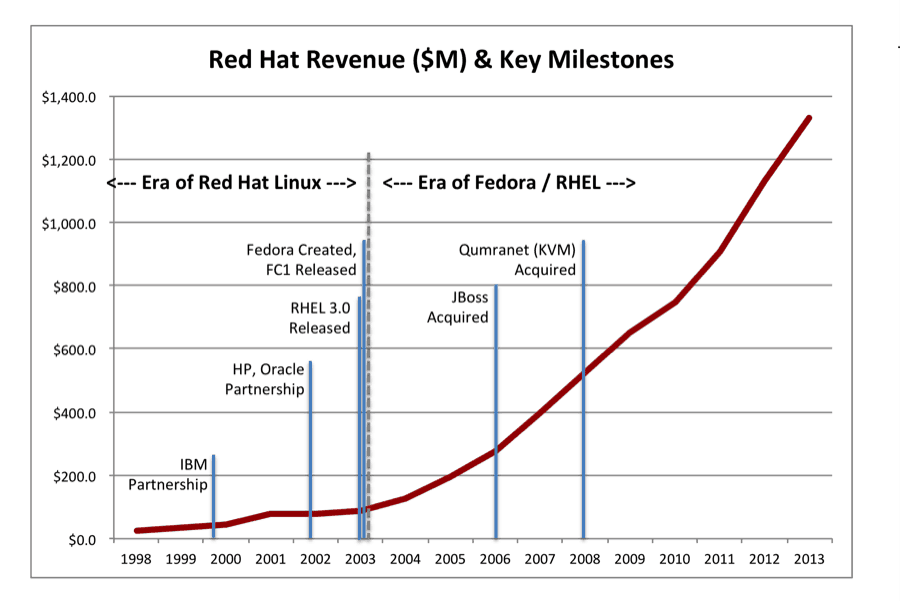

I have been in the IT industry since mid 1990's. This reminded me of an old wave that came out with Linux Torvalds way back in the mid 90's which mentioned as the open source paradigm shift that should happen to compete with the Microsoft monopoly. There were several tens of companies that took over the linux bandwagon which includes Debian, Fedora, Suse, Gentoo, Slackware etc. Some of them had the following commerical names such as Redhat, Suse, Mandrake, Ubuntu, Oracle etc. So who benefitted out of this? It is the hardware companies such as IBM, HP, Dell, Intel, AMD etc who could create more chips to create new operating systems and hardwares to sell to customers who did not like the monopoly of Microsoft. The companies such as IBM, HP and Oracle (earlier Sun), even though they had their own operating systems known as AIX, HP-UX and Solaris respectively, they started supported Linux to expand their business. So lets see who made money with Linux and who lost money with Linux. The hardware companies and the Linux distribution companies made money where as the enterprises or software companies lost money in developing their exisiting software solutions that were already built on AIX, HP-UX, Solaris and Windows needed to be ported to yet another 32 and 64 bit Linux OS. The cost of porting and certification was very high since many of these distributions such as RHEL 5.1, 5.2, 5.3 and similar Suse 9.1, 9.2, Mandrake xx..yy has to be tested and certified. So many of the customers who chose to pick any of the non-common Linux distributions could not get such certificated enterprise software.

Relationship with Linux and Hadoop

I find a close relationship with the Linux and Hadoop. I believe that Hadoop is the new Linux of 2010's which is ready to break away the propitiatory software GRID solutions that were offered in a free software manner.

After 15 years of Linux history, there are only a few companies that are now considered successful, which is Redhat and Suse in the enterprise OS space .

Future of Hadoop

So what is the future of Hadoop, it will be the same future that happened to Linux. Few of the distribution companies many money and majority of the distribution companies would die. As I see one of the Hadoop distribution (distro) companies is following the Linux path which is the Hortonworks. The other strong close contenders are Cloudera and MapR in addition to the direct software vendors such as Greenplum and IBM.

References:

1) History of Linux

http://en.wikipedia.org/wiki/History_of_Linux

2) Linux Distributions

http://en.wikipedia.org/wiki/Linux_distribution

3) Hadoop Distributions

http://wiki.apache.org/hadoop/Distributions%20and%20Commercial%20Support

4) Hortonworks partnership with SAP

5) Hortonworks partnership with Redhat and SAP